Understanding Entropy

Measuring Randomness

Entropy quantifies randomness and unpredictability, and it is measured in bits: each additional bit doubles the number of equally likely possibilities an attacker has to consider. A strong symmetric cryptographic key needs roughly 128 to 256 bits of entropy.
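To make the bit counts concrete, here is a minimal sketch of how entropy scales for secrets whose symbols are drawn independently and uniformly at random. The helper name `uniform_entropy_bits` and the 62-symbol alphabet are illustrative assumptions, not part of any particular product.

```python
import math
import secrets

def uniform_entropy_bits(alphabet_size: int, length: int) -> float:
    """Entropy in bits of `length` symbols drawn independently and
    uniformly at random from an alphabet of `alphabet_size` symbols."""
    return length * math.log2(alphabet_size)

# 16 bytes from the operating system's CSPRNG: 16 * log2(256) = 128 bits.
key = secrets.token_bytes(16)
key_bits = uniform_entropy_bits(256, len(key))

# Number of random characters from a-z, A-Z, 0-9 (62 symbols, assumed
# alphabet) needed to reach at least 128 bits.
chars_needed = math.ceil(128 / math.log2(62))

print(key_bits, chars_needed)
```

The formula only holds when every symbol really is chosen uniformly at random; it overstates the entropy of anything a person picked by hand.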

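Passwords that people choose themselves, rather than drawing them from a true random generator, carry far less entropy than their length suggests, because human choices follow predictable patterns. This is why key derivation functions and random salts are critical: they add no entropy, but they make every guess expensive and defeat precomputed tables.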
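As a rough illustration of a key derivation function at work, the sketch below stretches a password with PBKDF2-HMAC-SHA256 from Python's standard library and a random salt (a per-password nonce). The function name `derive_key`, the iteration count, and the sample passphrase are assumptions chosen for the example; a real deployment would pick its own algorithm and parameters.

```python
import hashlib
import secrets

def derive_key(password: str, salt: bytes, iterations: int = 600_000) -> bytes:
    """Stretch a password into a 32-byte key with PBKDF2-HMAC-SHA256.

    The iteration count is an assumed value for illustration; higher
    counts make each guess slower for an attacker.
    """
    return hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, iterations, dklen=32
    )

# A fresh random salt per password; it is stored next to the derived key
# and does not need to be secret.
salt = secrets.token_bytes(16)
key = derive_key("correct horse battery staple", salt)
print(key.hex())
```

The derived key is 32 bytes long, but its entropy is still bounded by the entropy of the password. The function only raises the cost of testing each candidate.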

Information Theory

Claude Shannon's information theory models entropy as the average 'surprise', or uncertainty, in the outcome of a random variable. Security systems aim for maximum entropy so that attackers cannot shrink the search space with statistical models, such as learned guessing heuristics or the letter and word frequencies of natural language.

Everyday Example

Think of entropy as a measure of 'surprise'. If I tell you today is Monday, the fact that tomorrow is Tuesday has zero entropy: there is no surprise. If I flip a fair coin a hundred times, the exact sequence of heads and tails carries 100 bits of entropy, because each of the 2^100 possible sequences is equally likely and none can be predicted ahead of time.
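The 'surprise' of a single outcome can be put into numbers as log2(1 / p), where p is the outcome's probability. The short sketch below applies that to the two examples above; the function name `surprisal_bits` is an illustrative choice.

```python
import math

def surprisal_bits(probability: float) -> float:
    """Surprise (self-information) of one outcome, in bits: log2(1 / p)."""
    if not 0 < probability <= 1:
        raise ValueError("probability must be in (0, 1]")
    return math.log2(1 / probability)

# Given that today is Monday, "tomorrow is Tuesday" is certain.
tuesday_after_monday = surprisal_bits(1.0)

# One fair coin flip, and one specific sequence of 100 fair flips.
one_coin_flip = surprisal_bits(0.5)
hundred_flip_sequence = surprisal_bits(0.5 ** 100)

print(tuesday_after_monday, one_coin_flip, hundred_flip_sequence)
```

A certain outcome scores zero bits, one fair flip scores one bit, and a specific run of a hundred flips scores a hundred.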

The Deep Mathematics

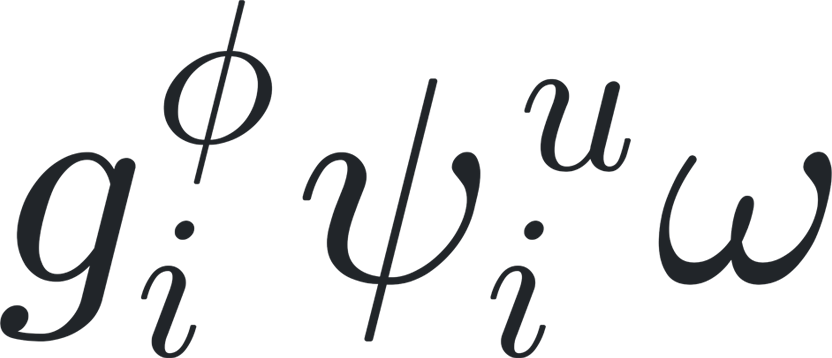

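Shannon entropy is defined as H(X) = -Σ P(x) log2 P(x), where the sum runs over every possible value x of the discrete random variable X and P(x) is the probability of that value. It reaches its maximum when all values are equally likely. In modern cryptography, a high-entropy key is drawn uniformly at random from the key space, so no key is more likely than any other and statistical guessing gives an attacker no advantage over exhaustive search. Entropy alone does not stop algebraic or side-channel attacks, which target the cipher or its implementation rather than the unpredictability of the key.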
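The definition translates almost directly into code. The following is a minimal sketch that takes a list of probabilities and returns the entropy in bits; the function name `shannon_entropy` and the sample distributions are illustrative assumptions.

```python
import math

def shannon_entropy(probabilities):
    """H(X) = -sum(p * log2(p)) in bits, written as sum(p * log2(1 / p)).

    Outcomes with probability zero contribute nothing and are skipped.
    """
    if not math.isclose(sum(probabilities), 1.0):
        raise ValueError("probabilities must sum to 1")
    return sum(p * math.log2(1 / p) for p in probabilities if p > 0)

fair_coin = shannon_entropy([0.5, 0.5])          # uniform over 2 outcomes
biased_coin = shannon_entropy([0.9, 0.1])        # skewed, so less uncertain
certain = shannon_entropy([1.0])                 # no uncertainty at all
uniform_byte = shannon_entropy([1 / 256] * 256)  # uniform over 256 values

print(fair_coin, biased_coin, certain, uniform_byte)
```

The biased coin comes out well below one bit, which is the quantitative version of the point about predictable choices: any skew in the distribution lowers the entropy and gives a guesser something to exploit.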

Discover how giovium protects your data

giovium leverages these very cryptographic principles to keep your passwords, files, and secrets completely safe. Try it for free on any platform.

Download giovium